Industrial AI is widely recognized as a key enabler of smart manufacturing, supporting predictive maintenance, process optimization, energy efficiency, quality control, and safer operations. Yet despite years of investment, most industrial AI initiatives fail to scale beyond pilot deployments. The problem is not a lack of data, models, or ambition. It is the way industrial data architectures are assembled.

In early-stage pilots, AI often appears successful. Models perform well. Dashboards look promising. Initial insights are generated. But pilots operate under controlled conditions. Scope is limited. Data pipelines are carefully curated. Manual intervention compensates for architectural gaps.

When organizations attempt to extend these solutions across additional assets, lines, or plants, the underlying weaknesses become apparent. Integrations become brittle. Data behaves differently from site to site. Engineering effort grows faster than business value.

This is where most industrial AI projects stall.

Industrial environments generate vast volumes of data, but availability does not equal usability.

The Core Challenge: Data Availability

AI thrives on data. But in manufacturing, data is often:

Locked in silos across disparate business and operational systems (ERP, MES, SCADA, historians, PLM, CMMS, EAM, PLCs, controllers, etc.).

Inconsistent in volume, variety, and velocity.

Difficult to access due to legacy infrastructure and proprietary protocols.

Sensitive, raising security and privacy concerns.

Manufacturing data is distributed across PLCs, controllers, historians, SCADA systems, MES platforms, quality systems, and enterprise applications. Each system has its own data models, semantics, update frequencies, and operational constraints.

Even within a single plant, the same signal or KPI may be defined differently depending on the system producing it.

As a result, AI models are often trained and deployed on data that is inconsistent, poorly contextualized, or difficult to govern. Over time, this inconsistency erodes model reliability and operator trust.

Industrial AI does not fail because data is missing. It fails because data is fragmented and inconsistent.
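To make the inconsistency concrete, here is a minimal sketch of mapping the same temperature signal, reported under different tag names and units by two systems, onto one canonical record. The tag names, source labels, and the `CanonicalReading` type are hypothetical and chosen only for illustration. They do not describe any particular product's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class CanonicalReading:
    """One site-independent representation of a measurement."""
    asset_id: str
    signal: str
    value: float
    unit: str
    timestamp: datetime


# Hypothetical mappings: (source, local tag) -> (canonical signal, unit, converter).
# Both tags describe the same oven temperature, but in different units.
TAG_MAPPINGS = {
    ("scada", "TT101.PV"): ("temperature", "degC", lambda c: c),
    ("historian", "Line1_Temp_F"): ("temperature", "degC", lambda f: (f - 32.0) * 5.0 / 9.0),
}


def normalize(source: str, tag: str, asset_id: str, value: float, epoch_s: float) -> CanonicalReading:
    """Convert a source-specific reading into the canonical form."""
    signal, unit, convert = TAG_MAPPINGS[(source, tag)]
    return CanonicalReading(
        asset_id=asset_id,
        signal=signal,
        value=convert(value),
        unit=unit,
        timestamp=datetime.fromtimestamp(epoch_s, tz=timezone.utc),
    )


# The same physical condition reported by two systems ends up as the same value.
from_scada = normalize("scada", "TT101.PV", "oven-1", 100.0, 1_700_000_000)
from_historian = normalize("historian", "Line1_Temp_F", "oven-1", 212.0, 1_700_000_000)
```

Without a shared mapping of this kind, a model trained on one system's values would see the other system's readings as a different signal altogether.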

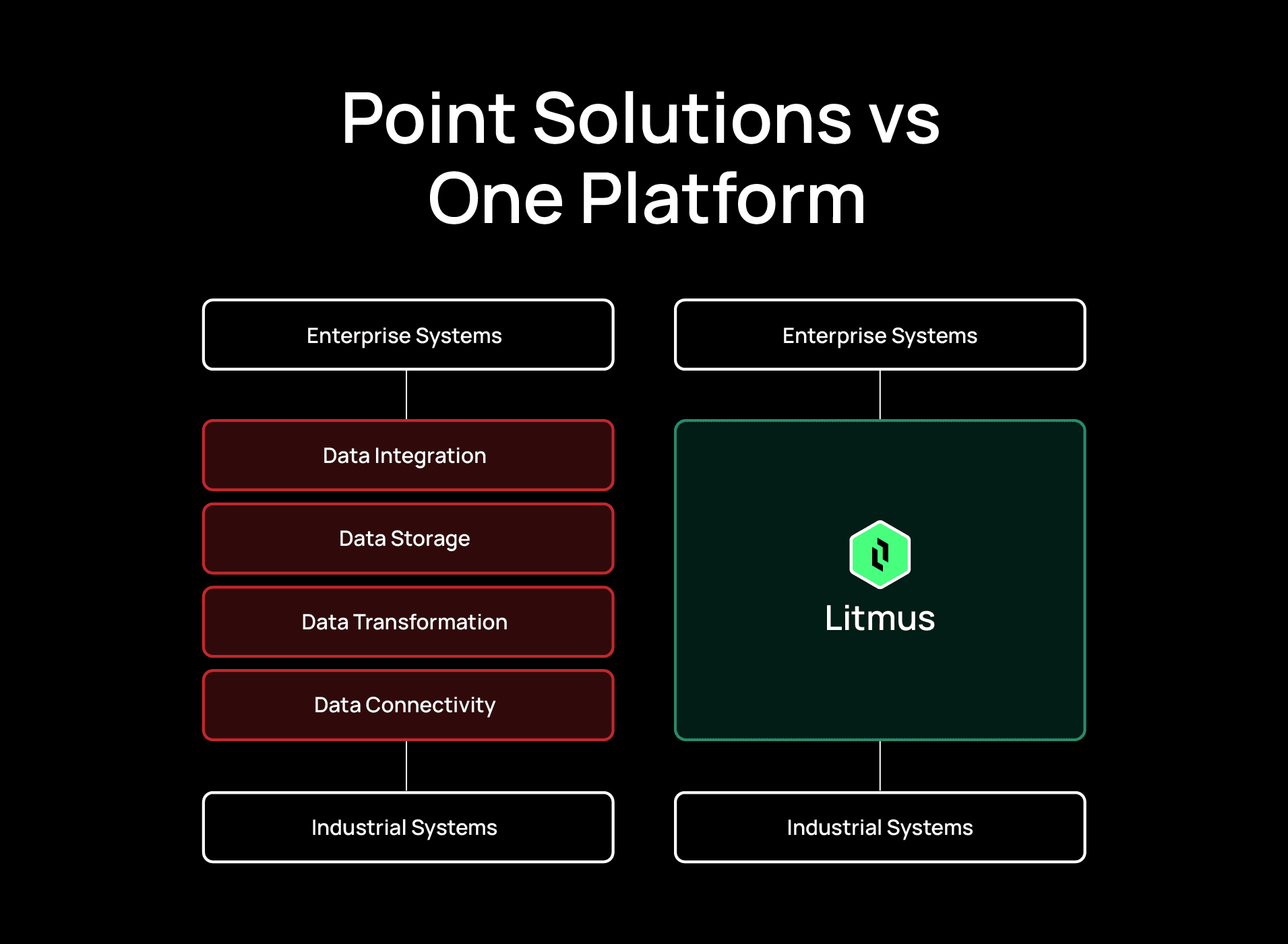

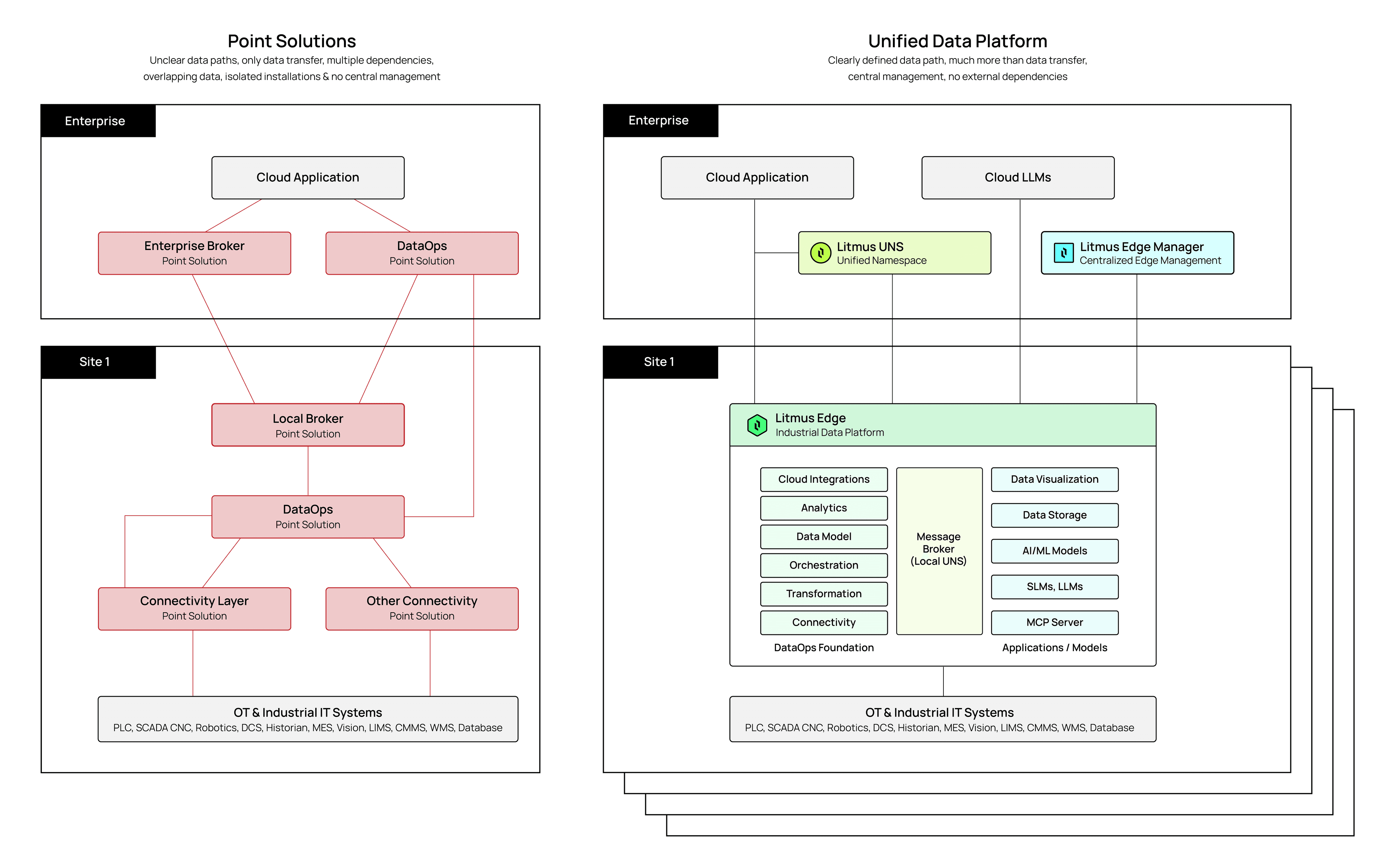

To move quickly, many manufacturers adopt a technology-first approach to AI pilots. They assemble collections of point solutions—tools for connectivity, data transformation, messaging, orchestration, visualization, and analytics—and integrate them through custom code.

Each tool performs its intended function well. Together, they can demonstrate technical feasibility. However, these stacks are optimized for experimentation, not long-term operation.

Each component maintains its own data lifecycle. Integrations are tightly coupled and sensitive to change. Scaling requires repeating the same engineering work at every site. Governance, security, and monitoring are fragmented across tools and teams.

Projects stall due to:

No direct business benefit for plant operations

Lack of scalability and no enterprise-wide vision

Poor data governance and no unified central management

Point solution tools fail to support industrial AI at scale, not because they are ineffective tools, but because they introduce structural limitations as systems grow.

High Total Cost of Ownership (TCO): Multiple licenses, redundant pipelines, and increased IT overhead.

Vendor Lock-In: Fragile integrations that break with version updates.

Siloed Data: No unified view, making governance and analytics nearly impossible.

As deployments expand, several constraints become unavoidable:

Latency: Data must travel through multiple systems—and often to centralized infrastructure—before action can be taken. In live production environments, this delay is unacceptable.

Reliability: Industrial network connectivity is not guaranteed. When AI workflows depend on continuous connectivity, execution breaks during outages or maintenance windows.

Cost: High-frequency sensor data and vision streams drive unpredictable bandwidth and compute costs when routed through centralized pipelines.

Context loss: Centralized models lack continuous awareness of local machine states, operating conditions, and historical nuance, reducing accuracy and trust.

These challenges may be manageable in isolated pilots. At scale, they compound, making AI systems brittle, expensive, and difficult to operationalize.
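One common way to soften the reliability constraint is store-and-forward buffering at the edge: readings are queued locally and delivered in order once the link returns. The sketch below is a simplified, in-memory illustration under stated assumptions. The `StoreAndForwardBuffer` class and its `send` callback are hypothetical names, and a production system would typically persist the queue to disk.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForwardBuffer:
    """Queue messages locally and deliver them in order when the link is up."""

    def __init__(self, send: Callable[[Dict], bool], max_items: int = 10_000) -> None:
        # `send` returns True on successful delivery, False if the link is down.
        self._send = send
        # Bounded queue: when full, the oldest message is dropped.
        self._queue: Deque[Dict] = deque(maxlen=max_items)

    def publish(self, message: Dict) -> None:
        """Enqueue a message, then try to drain the queue."""
        self._queue.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages oldest-first; stop at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._queue)


# Simulated uplink that can be toggled to mimic an outage.
link = {"up": False}
delivered = []


def send(message: Dict) -> bool:
    if not link["up"]:
        return False
    delivered.append(message)
    return True


buffer = StoreAndForwardBuffer(send)
buffer.publish({"seq": 1})   # link down: stays queued
buffer.publish({"seq": 2})   # link down: stays queued
link["up"] = True
buffer.publish({"seq": 3})   # link restored: all three are delivered in order
```

Local execution continues during the outage because producers only ever talk to the buffer, never directly to the remote endpoint.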

Across the system lifecycle, fragmentation increases risk:

Design Phase: Fragmented architecture, inconsistent data models, and complex integration planning.

Deployment Phase: Multiple runtimes, security gaps, and edge-cloud coordination issues.

Scaling Phase: Repeated work at every site, lack of centralized monitoring, and training overhead.

Maintenance Phase: Multiple vendors, version mismatches, and growing technical debt.

The result is predictable: unclear ROI, rising costs, and stalled AI initiatives.

A unified industrial data platform addresses these challenges by design. Instead of stitching together disconnected tools, unification provides a single architectural foundation for industrial data—covering connectivity, contextualization, orchestration, governance, and execution as a coherent system.

This approach enables:

End-to-end data flow with consistent data models across assets, lines, and plants

AI-ready, contextualized data pipelines

Centralized governance without sacrificing local execution

Faster deployment, with lower TCO, less operational overhead, and reduced integration time and cost

Most importantly, unified platforms support execution-grade AI, where intelligence operates reliably in real production environments, not just in dashboards. With a unified approach, manufacturers can move from fragmented pilots to enterprise-wide transformation.
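As a rough illustration of what contextualized, AI-ready data can look like, the sketch below attaches site, line, asset, and unit information to a raw tag value before it reaches a model. The registry contents, the tag address, and the `contextualize` function are assumptions made for this example and are not taken from any specific platform's API.

```python
from typing import Any, Dict

# Hypothetical asset registry: raw tag address -> operational context.
ASSET_CONTEXT: Dict[str, Dict[str, str]] = {
    "PLC7.DB12.W4": {
        "site": "plant-a",
        "line": "line-1",
        "asset": "press-03",
        "signal": "hydraulic_pressure",
        "unit": "bar",
    },
}


def contextualize(tag: str, value: float, timestamp: str) -> Dict[str, Any]:
    """Attach asset context to a raw reading, or fail loudly if none is registered."""
    context = ASSET_CONTEXT.get(tag)
    if context is None:
        raise KeyError(f"No context registered for tag {tag!r}")
    return {**context, "value": value, "timestamp": timestamp, "source_tag": tag}


record = contextualize("PLC7.DB12.W4", 182.5, "2024-01-01T00:00:00Z")
```

Keeping the registry in one governed place, rather than re-encoding it in each integration, is what lets the same model consume data from a new line or plant without site-specific rework.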

Litmus delivers a Unified Industrial Data Platform purpose-built for industrial environments. It enables manufacturers to connect heterogeneous OT and IT systems, contextualize data consistently, orchestrate real-time pipelines, and execute AI workloads at the edge—while maintaining centralized visibility and control across sites.

Rather than replacing individual tools, Litmus replaces fragmentation with:

Universal Connectivity: Rapid integration with any OT or IT system.

Contextualization & Orchestration: Transform raw data into AI-ready formats.

Centralized Management: Secure, role-based control across multiple sites.

AI Integration: Native support for LLMs and SLMs running at the edge, and real-time model interaction via an MCP server.

Litmus enables a phased approach:

1. Discovery: Assess current state and existing point solutions.

2. Realization: Build unified architecture and pilot implementation.

3. Scale: Roll out across sites with centralized management.

Point solutions may get you started, but they won’t help you scale. As manufacturers move beyond pilots, architectural decisions become decisive. A unified industrial data platform provides the foundation required to turn AI from isolated experiments into durable, enterprise-wide capability. Industrial AI does not scale on tools. It scales on architecture.