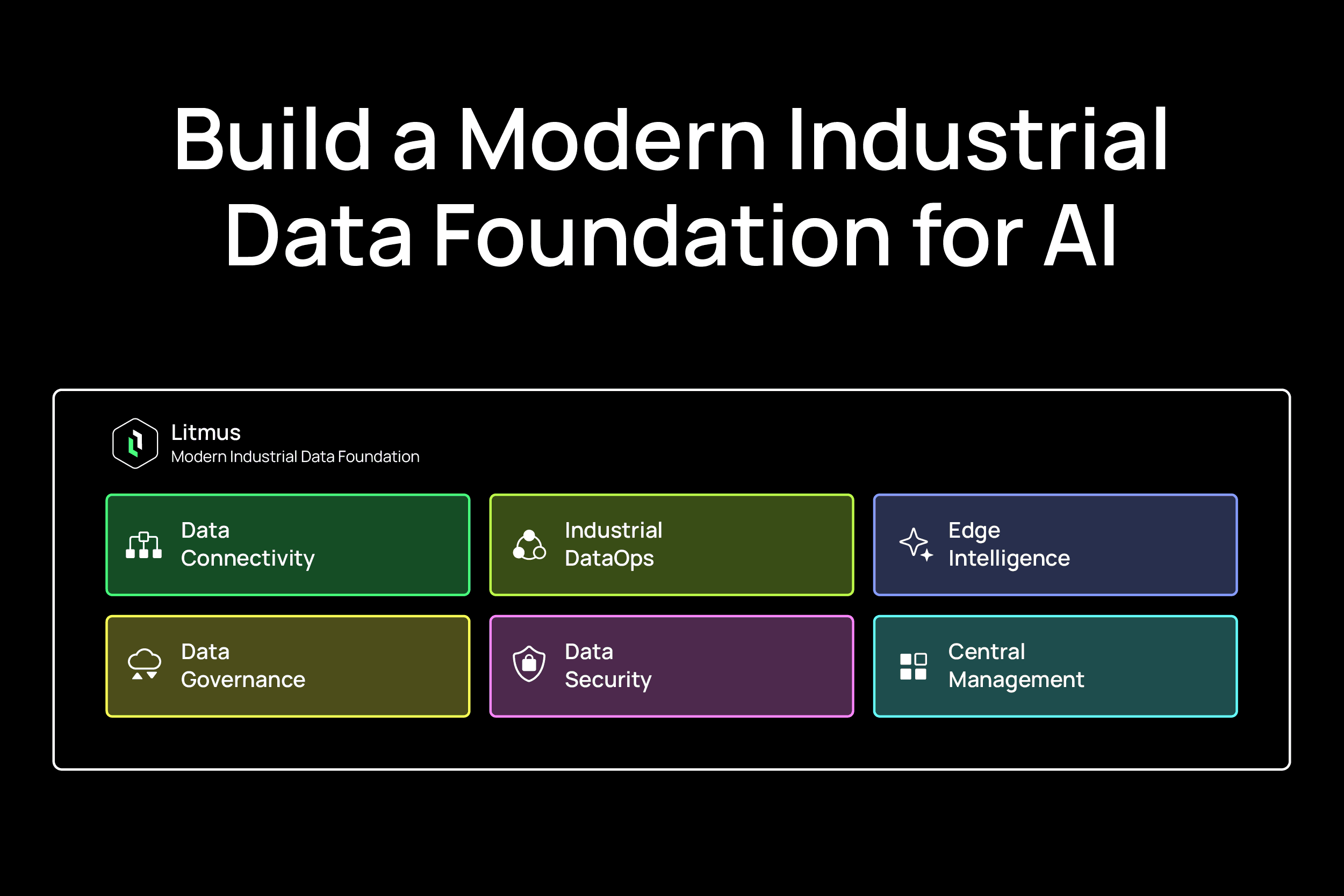

Industrial AI succeeds when OT and IT operate from the same, trusted data backbone. The fastest path is a modern foundation that unifies machine, process, and enterprise data via broad connectivity, a Unified Namespace, disciplined DataOps, and governance—so models can be trained in the cloud and executed safely at the edge. If you’re evaluating the “best” partner for OT/IT data integration and protocol translation, look for vendor-neutral connectivity at scale, a robust UNS, and cloud-native, secure integration. Litmus exemplifies this approach with extensive driver coverage, a Unified Namespace architecture, and embedded edge AI that minimizes custom code, accelerating time-to-value for predictive maintenance, quality, and throughput initiatives.

An AI-first data strategy aligns analytics and machine learning with concrete business outcomes—reliability, yield, energy, and cost per unit—before any tooling. Start by prioritizing a small set of industrial AI use cases that matter most (for example, predictive maintenance for bottleneck assets or vision-based quality inspection on high-volume lines) and quantify expected improvements in OEE, scrap, MTBF, or energy intensity. Establish governance and measurement from day one so leadership, OT, and IT are aligned on targets, data requirements, and success criteria.
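To make the baseline measurable before any tooling is chosen, the KPIs named above can be computed from a simple shift summary. The following is a minimal sketch, assuming the common definition of OEE as availability × performance × quality; the `ShiftRecord` type and its field names are hypothetical and only illustrate the calculation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ShiftRecord:
    """Hypothetical shift summary; field names are illustrative only."""
    planned_minutes: float      # scheduled production time
    downtime_minutes: float     # unplanned stops during the shift
    ideal_cycle_seconds: float  # ideal time to produce one unit
    total_units: int            # all units produced, good or bad
    good_units: int             # units that passed quality checks
    failures: int               # count of unplanned failures


def oee(shift: ShiftRecord) -> dict:
    """Return availability, performance, quality, and OEE as fractions."""
    run_minutes = shift.planned_minutes - shift.downtime_minutes
    availability = run_minutes / shift.planned_minutes
    performance = (shift.ideal_cycle_seconds * shift.total_units) / (run_minutes * 60)
    quality = shift.good_units / shift.total_units
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


def mtbf_hours(shift: ShiftRecord) -> Optional[float]:
    """Mean time between failures: run time divided by failure count."""
    if shift.failures == 0:
        return None  # no failures observed, so MTBF is undefined for this shift
    run_hours = (shift.planned_minutes - shift.downtime_minutes) / 60
    return run_hours / shift.failures


shift = ShiftRecord(480, 48, 30, 800, 760, 3)
baseline = oee(shift)
```

Recording these values per line before the pilot starts gives leadership, OT, and IT a shared number to compare against once a model is in production.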

As you scope pilot domains, emphasize semantic clarity and trust. One pragmatic playbook is to “start with intelligent data modeling for semantic clarity, add automated trust scoring, and focus on authoritative sources, basic classification, accessible pipelines, and observability for pilot domains”. That discipline keeps efforts focused on value while preventing technical debt.

| Use Case | Typical Data | Expected Outcomes |
| --- | --- | --- |
| Predictive Maintenance | Vibration, current, temperature, event logs | Fewer unplanned stops, higher MTBF, lower maintenance cost |
| Vision-Based Quality Inspection | Images/video, PLC tags, reject codes | Scrap reduction, faster root cause, higher first-pass yield |
| Throughput and Bottleneck Analysis | Cycle times, queue lengths, OEE tags | Increased output, reduced WIP, balanced lines |
| Energy Optimization | Power meters, machine states, production mix | Lower energy per unit, demand charge avoidance |
| Process Anomaly Detection | Sensor time series, setpoints, alarms | Early fault detection, fewer minor stops |
| SPC and Parameter Tuning | Historian data, MES lots, recipe parameters | Tighter variability, higher process capability |

OT/IT data integration starts with connecting PLCs, CNCs, robots, sensors, and historians—regardless of vendor or vintage—into one platform, then organizing that data with a Unified Namespace. A UNS serves as a contextual hub and single source of truth where machines, lines, sites, and enterprises share a common semantic structure that IT systems and AI applications can consume consistently.

Deploy industrial brokers that normalize protocols and metadata into the UNS while accommodating both legacy and modern assets. At scale, extensive pre-built protocol coverage (hundreds of drivers across OPC UA, Modbus, Siemens S7, Rockwell CIP, MTConnect, MQTT Sparkplug, and more) reduces integration friction and future-proofs connectivity for new assets. This evolution moves you from a brittle OT/IT pyramid to a modern factory data hub with publish/subscribe interoperability and composable data products.

- Prioritize wide driver coverage and native protocol translation with buffering and store-and-forward
- Require a production-grade UNS with rich context, versioning, and namespace governance
- Demand secure, cloud-native integration (role-based access, tokenization, TLS, zero trust)
- Validate edge AI and low-code pipelines to minimize custom development
- Ensure multi-site scalability and lifecycle management of gateways and namespaces

| Dimension | Legacy Protocol Gateways | UNS-Based Factory Data Hub |
| --- | --- | --- |
| Data Model | Point-to-point tags, siloed | Shared, contextual model (assets, lines, sites) |
| Scalability | Fragile as connections grow | Pub/sub scale with standardized topics |
| Change Management | Vendor-specific, manual | Central namespace versioning and CI/CD |
| AI Readiness | Limited context and lineage | Rich semantics, lineage, time sync, quality flags |
| Resilience | Minimal buffering | Edge buffering, store-and-forward, retry policies |
| Security | Mixed, device by device | Centralized policies, identities, and least privilege |
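The resilience row is easier to evaluate with a concrete picture of store-and-forward. The sketch below is a simplified, in-memory illustration, not any vendor's implementation: `StoreAndForwardBuffer` is a hypothetical class, the `send` callable stands in for a real broker or cloud client, and a production gateway would typically persist the queue to disk so data survives a restart.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForwardBuffer:
    """Queue messages locally and forward them in order when the link is up."""

    def __init__(self, send: Callable[[Dict], bool], max_items: int = 10_000):
        self._send = send  # returns True on confirmed delivery, False otherwise
        # Bounded queue: when full, the oldest message is dropped first.
        self._queue: Deque[Dict] = deque(maxlen=max_items)

    def publish(self, message: Dict) -> int:
        """Buffer a message, then try to drain; return how many were delivered."""
        self._queue.append(message)
        return self.flush()

    def flush(self) -> int:
        """Send buffered messages oldest-first, stopping at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # link still down: keep the message for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._queue)
```

A message is removed only after `send` confirms delivery, so a dropped connection delays data rather than losing it, up to the configured buffer size.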

Litmus provides extensive device connectivity, a robust UNS, and secure integration to IT and cloud tooling—helping teams standardize OT data quickly without custom code.

Hybrid architectures keep time-critical inference close to machines while leveraging elastic cloud resources for model training and fleet analytics. Edge computing executes sub-second inference, simple control logic, and local HMI apps where latency and safety matter. Cloud computing handles large-scale feature engineering, model retraining, data cataloging, MLOps, and multi-site analytics. For large factories, blending on-premises compute with cloud services reduces latency and cost while enabling central governance and reuse.

- Edge: real-time signal processing, anomaly scoring, vision inference, buffering, protocol translation, HMI/SCADA integrations, UNS publication
- Cloud: model training and retraining, feature stores, enterprise data lakes/warehouses, cross-site analytics, centralized governance, lineage, and ML registries
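As an illustration of the kind of lightweight anomaly scoring that can run at the edge, the sketch below scores each new reading against a rolling window using a z-score. This is one simple technique chosen for the example, not a method the article prescribes; the `RollingZScore` class, window size, and threshold are assumptions to tune per signal.

```python
from collections import deque
from math import sqrt


class RollingZScore:
    """Score each reading against the mean and spread of recent readings."""

    def __init__(self, window: int = 50, threshold: float = 3.0):
        self._values = deque(maxlen=window)  # most recent readings only
        self.threshold = threshold

    def score(self, value: float) -> float:
        """Return the z-score of value versus the current window, then store it."""
        n = len(self._values)
        if n < 2:
            z = 0.0  # not enough history to estimate spread
        else:
            mean = sum(self._values) / n
            variance = sum((v - mean) ** 2 for v in self._values) / (n - 1)
            std = sqrt(variance)
            z = 0.0 if std == 0 else (value - mean) / std
        # Note: the reading is stored even when anomalous, which widens the
        # window's spread afterwards; some designs exclude flagged points.
        self._values.append(value)
        return z

    def is_anomaly(self, value: float) -> bool:
        return abs(self.score(value)) > self.threshold


detector = RollingZScore(window=20, threshold=3.0)
readings = [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 15.0]
flags = [detector.is_anomaly(r) for r in readings]
```

Only the flag or score needs to be published upstream, which keeps the latency-sensitive decision local while the cloud retains the history needed for retraining.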

1. Discover assets and drivers.
2. Connect and buffer OT signals.
3. Normalize into a UNS.
4. Stream to edge pipelines for filtering/feature creation.
5. Persist to Bronze storage.
6. Promote to Silver with cleaning and context.
7. Aggregate to Gold for KPIs and AI features.
8. Train models in the cloud.
9. Register and deploy to edge.
10. Monitor performance and data drift.
11. Automate updates via CI/CD.

Containerized applications (Docker/Kubernetes) and secure connectors to AWS, Azure, and Google Cloud are best practices for portability and policy enforcement.

DataOps brings software engineering discipline to data: automated ingestion, testing, orchestration, and monitoring that improve reliability and speed. A medallion architecture structures pipelines into progressive quality layers—Bronze for raw, immutable data; Silver for cleaned, conformed, and enriched records; Gold for aggregated and trusted datasets that power analytics and AI. This pattern is widely used in industrial AI reference architectures to streamline data validation and model readiness.

- Bronze: raw sensor time series, PLC tags, events, images stored with lineage and timestamps
- Silver: cleaned signals with units, calibrated values, asset hierarchy, joins to MES lots or work orders; ideal for predictive maintenance features and SPC
- Gold: trusted, aggregated tables (for example, shift-level OEE or golden signals for bottleneck assets) that drive real-time dashboards and KPI alerts

Automate ingestion, transformation, and orchestration for reliability—modern data stacks emphasize tested pipelines and data quality monitoring to reduce breakage and speed iteration.

| Source | Bronze (raw) | Silver (clean/enriched) | Gold (trusted/aggregated) |
| --- | --- | --- | --- |
| PLC/SCADA tags | Time-stamped tag values | Units, scaling, asset context | Line/shift KPIs, alarms by asset |
| Vibration sensors | Raw waveforms | Features (RMS, kurtosis), health scores | Asset risk indices, maintenance windows |
| Vision cameras | Frames/blobs | Labeled defects, bounding boxes | Per-lot defect rates, root-cause features |
| MES/ERP | Events, work orders | Conformed joins to assets and time | Throughput, WIP, schedule adherence |
| Energy meters | Interval kWh, kW | Weather/production normalization | Energy per unit, demand charges |
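The PLC/SCADA row can be sketched end to end in a few lines. The example below is a minimal illustration in plain Python, assuming raw rows arrive as dictionaries; the tag name, the `TAG_CONTEXT` lookup, and the scale factor are hypothetical, and a real pipeline would run on a streaming or warehouse engine rather than in-memory lists.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical tag metadata: maps a raw PLC address to asset context,
# engineering unit, and the scale factor that converts raw counts.
TAG_CONTEXT = {
    "PLC1.DB10.W4": {"asset": "filler-02", "metric": "motor_temp",
                     "unit": "degC", "scale": 0.1},
}


def to_silver(bronze_rows):
    """Drop unknown tags and bad-quality rows; add units and asset context."""
    silver = []
    for row in bronze_rows:
        ctx = TAG_CONTEXT.get(row["tag"])
        if ctx is None or row.get("quality") != "good" or row.get("raw") is None:
            continue  # Bronze keeps the original row; Silver simply skips it
        silver.append({
            "ts": row["ts"],
            "asset": ctx["asset"],
            "metric": ctx["metric"],
            "value": row["raw"] * ctx["scale"],
            "unit": ctx["unit"],
        })
    return silver


def to_gold(silver_rows):
    """Aggregate Silver rows into per-asset, per-metric summary statistics."""
    grouped = defaultdict(list)
    for row in silver_rows:
        grouped[(row["asset"], row["metric"])].append(row["value"])
    return {key: {"mean": mean(vals), "max": max(vals), "count": len(vals)}
            for key, vals in grouped.items()}


bronze = [
    {"ts": 1, "tag": "PLC1.DB10.W4", "raw": 400, "quality": "good"},
    {"ts": 2, "tag": "PLC1.DB10.W4", "raw": 420, "quality": "good"},
    {"ts": 3, "tag": "PLC1.DB10.W4", "raw": 9999, "quality": "bad"},
    {"ts": 4, "tag": "UNMAPPED.TAG", "raw": 1, "quality": "good"},
]
silver = to_silver(bronze)
gold = to_gold(silver)
```

Keeping Bronze untouched and deriving Silver and Gold as pure functions of it means a corrected scale factor or mapping can be replayed over history without re-collecting data.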

Trust and traceability are non-negotiable in regulated industrial environments. Data governance defines the policies and processes to ensure accuracy, lineage, privacy, and compliance across the lifecycle. Data observability automates monitoring of data health, lineage integrity, anomaly detection, and quality thresholds so issues surface before models or dashboards regress. Build a durable framework that emphasizes quality and umbrella policies. A practical approach catalogs all datasets, establishes end-to-end lineage, automates validation tests, and applies PII controls where enterprise data is combined with OT signals.

- Central data catalog with business and technical metadata
- End-to-end lineage across edge, UNS, pipelines, and ML models
- Schema and drift detection with alerting
- Data quality rules and automated validation at each medallion layer
- Role-based access control, secrets management, and audit logs
- Encryption in transit and at rest, tokenization where appropriate
- Policy as code and change management workflows
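Data quality rules and schema checks from the list above can be expressed as small, testable functions that run at each layer. The sketch below is an assumption-laden illustration: the rule names, the value range, the expected column set, and the 1% failure tolerance are all hypothetical and would come from your own governance policies.

```python
from typing import Callable, Dict, Iterable, List, Set

Rule = Callable[[Dict], bool]

# Hypothetical Silver-layer rules; the range is illustrative, not a standard.
SILVER_RULES: Dict[str, Rule] = {
    "has_asset": lambda r: bool(r.get("asset")),
    "has_unit": lambda r: bool(r.get("unit")),
    "value_in_range": lambda r: isinstance(r.get("value"), (int, float))
    and -50.0 <= r["value"] <= 200.0,
}

EXPECTED_COLUMNS: Set[str] = {"ts", "asset", "metric", "value", "unit"}


def validate(rows: Iterable[Dict], rules: Dict[str, Rule]) -> Dict[str, int]:
    """Count how many rows fail each named rule."""
    failures = {name: 0 for name in rules}
    for row in rows:
        for name, rule in rules.items():
            if not rule(row):
                failures[name] += 1
    return failures


def passes(failures: Dict[str, int], total_rows: int,
           max_failure_rate: float = 0.01) -> bool:
    """Gate promotion: every rule must stay under the allowed failure rate."""
    if total_rows == 0:
        return False  # an empty batch is treated as a failed check
    return all(count / total_rows <= max_failure_rate for count in failures.values())


def schema_drift(row: Dict, expected: Set[str]) -> Dict[str, List[str]]:
    """Report columns that disappeared or appeared relative to the contract."""
    observed = set(row)
    return {"missing": sorted(expected - observed),
            "unexpected": sorted(observed - expected)}
```

Running `validate` before promoting a batch, and alerting when `passes` returns `False`, is one simple way to stop bad data before it reaches models or dashboards.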

A robust ML lifecycle connects your data foundation to value in production. The core stages are: data labeling, model training and validation, registration, deployment to edge and/or cloud, and continuous monitoring with feedback loops. Deploying models to the edge near equipment enables ultra-low-latency inference for control and safety, while cloud endpoints support fleet analytics and decision support. Maintain lineage and auditability by linking training data, code versions, features, and model artifacts to production predictions—an approach consistent with enterprise MLOps guidance. Monitor model performance, data drift, and concept drift; trigger retraining or rollback via automated policies.

1. Curate and label data from Silver/Gold layers.
2. Train and validate models with reproducible pipelines.
3. Register models with metadata and approval gates.
4. Containerize and deploy to edge gateways or cloud endpoints.
5. Monitor accuracy, latency, drift, and data quality.
6. Automate retraining, A/B tests, and safe rollback.
7. Document lineage and compliance artifacts.
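For the monitoring step, one widely used way to quantify data drift on a single feature is the Population Stability Index (PSI), which compares the binned distribution of recent data against the training reference. The sketch below is a minimal implementation under stated assumptions: equal-width bins derived from the reference range, out-of-range values clamped to the edge bins, and a small floor on empty bins. The article does not prescribe PSI; it is used here only as an example metric.

```python
from math import log
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], lo: float, width: float,
                   bins: int, eps: float = 1e-6) -> List[float]:
    """Fraction of values per bin; values outside the range go to edge bins."""
    counts = [0] * bins
    for v in values:
        idx = int((v - lo) / width)
        counts[min(max(idx, 0), bins - 1)] += 1
    total = len(values)
    # Floor empty bins at eps so the logarithm below stays defined.
    return [max(c / total, eps) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index of current data against the reference."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant signals
    ref = _bin_fractions(reference, lo, width, bins)
    cur = _bin_fractions(current, lo, width, bins)
    return sum((c - r) * log(c / r) for r, c in zip(ref, cur))


training = [float(v) for v in range(100)]
shifted = [v + 50.0 for v in training]
stable_score = psi(training, training)
drift_score = psi(training, shifted)
```

A common rule of thumb treats PSI above roughly 0.25 as significant drift, but that threshold is a convention rather than a guarantee; an automated policy would compare the score against a limit validated for each feature before triggering retraining or rollback.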

Operationalization means turning validated analytics and models into live workflows that touch machines, people, and systems. Integrate data and ML pipeline outputs with MES, CMMS, QMS, and notification systems so teams act in the flow of work. Modern data stacks combine transforms, analytics, and operational delivery to turn raw signals into action reliably. Start with focused pilots that leverage existing data to prove ROI quickly; industrial case studies show that well-scoped, targeted deployments can reduce defects and downtime within weeks.

A pilot-to-scale roadmap keeps momentum while hardening architecture and governance:

1. Prioritize use case, define success metrics, map data sources
2. Connect assets, stand up UNS, build Bronze/Silver pipelines
3. Train model, validate, deploy to a single cell/line
4. Measure impact, document lessons, harden security/governance
5. Replicate to additional lines/sites, automate CI/CD and monitoring
6. Expand domain data products, refine models, and scale integrations

Containerized or plug-and-play edge deployments like Litmus reduce change-management friction and speed rollouts across heterogeneous plants.

Sustained impact requires cross-functional collaboration and domain data products—curated, reusable datasets and models owned by business or engineering teams. Decentralizing ownership with self-service analytics and low-code tools accelerates iteration and reduces bottlenecks on central IT while preserving shared governance.

- Operations and maintenance: contextual data literacy, root-cause analysis with AI-powered dashboards, digital work instructions
- Controls and OT engineers: secure connectivity, UNS modeling, edge deployment and monitoring
- Data engineers: streaming ingestion, medallion pipelines, orchestration, and observability
- Data scientists/ML engineers: feature stores, MLOps, edge inference optimization
- Product/line leaders: value framing, KPI design, pilot governance, and change management

For examples of domain-led transformation and self-service operating models, see these industrial transformation success stories.

Start by defining clear AI use cases, then connect OT sources, standardize with a Unified Namespace, implement a hybrid edge/cloud architecture, build medallion-based DataOps pipelines, and layer in governance with continuous monitoring.

Adopt an architecture that connects, cleans, enriches, contextualizes, and validates data; add automated lineage; and enforce governance policies that maintain accuracy and compliance end to end.

A UNS provides a single, contextual source of truth that standardizes industrial data, simplifying integrations and powering reliable, scalable AI applications.

End-to-end lineage, automated data quality and anomaly detection, role-based access with policy enforcement, and active monitoring ensure trust, compliance, and repeatability at scale.

Launch focused pilots using existing data to prove ROI quickly, then scale successful patterns (connectivity, UNS, pipelines, and governance) across lines and sites with a central management system.